Phishing has always been a numbers game, but artificial intelligence (AI) is changing the rules. Fraudsters now use AI to craft more convincing and human-like scams at scale.

Not long ago, spotting a phishing email was easier. Spelling errors and awkward grammar often gave scams away. AI has removed some of these obvious red flags. Fraudsters now use generative AI models to produce highly personalized scams. In its Q3 2025 threat report, the cybersecurity company Gen Digital, owner of Norton and Avast, recorded over 140,000 AI-generated phishing websites mimicking real brands. The same report found that data breaches rose 82% quarter-over-quarter, with 83% exposing passwords. In short, AI has dramatically increased the speed and scale of phishing campaigns.

In late 2025, the FBI reported receiving more than 5,100 account takeover (ATO) complaints since January 2025, totaling over $262 million in losses. This figure only reflects U.S. cases that were reported; the actual global impact is almost certainly much higher.

Phishing remains one of the most common entry points for breaches. AI allows fraudsters to launch more phishing attempts across multiple channels, and at higher volume more of those attempts are likely to get through.

With phishing and account takeover incidents rising, one might ask: are existing defenses enough? Employee security training and spam filters help, but AI makes it harder for humans and automated systems to distinguish real messages from fake ones.

Why enterprise accounts are targeted and how breaches escalate

Corporate accounts and data are highly valuable, so attackers are motivated to infiltrate enterprise environments. AI helps not only with the initial phishing attempt but also during the post-compromise phase. Once an attacker gains access, they can use AI to sift through stolen emails and data more efficiently.

A single compromised employee email account can trigger a large-scale breach. An attacker may phish a user and steal their Microsoft Office 365 or Google Workspace credentials. With an authenticated session, the attacker can impersonate the user internally and phish other employees. Using a legitimate-looking internal email address, the attacker can move laterally, targeting accounts with greater access privileges and expanding the breach.

In many cases, the ultimate goal is to steal sensitive data or trick someone into wiring money. AI makes this easier by crafting highly believable emails. For example, an attacker could generate a message from a senior manager to a junior staffer that mimics the manager’s writing style and references ongoing projects.

Every workforce account is now a potential entry point. Security teams must focus on shoring up identity defenses.

Layered identity verification as a defense

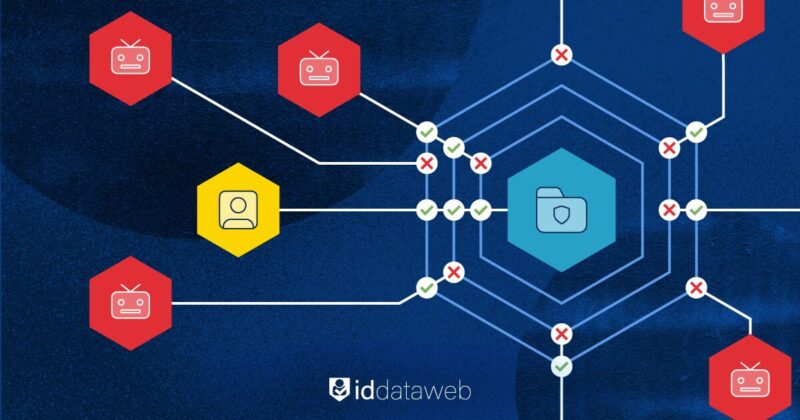

To counter AI-enhanced phishing, organizations must update their cybersecurity strategies. This involves adopting multi-layered, identity-centric defenses. Instead of relying solely on static protections such as passwords, email filters, or two-factor authentication, organizations should combine multiple independent checks. If one fails, another should catch the intruder. The goal is to force attackers to overcome multiple hurdles, even after they have compromised an employee account.

Examples of adaptive defenses include phishing-resistant multi-factor authentication and risk-based, step-up authentication. Phishing-resistant methods such as FIDO2 security keys and passkeys bind each credential to the legitimate site, so a look-alike page cannot capture and replay it. Mobile push prompts are harder to intercept than SMS codes, but they can still be defeated by push-fatigue attacks or real-time phishing proxies, so they are an improvement over SMS rather than a phishing-resistant method. Risk-based authentication layers behavioral analytics and device intelligence on top of standard login processes. With these defenses, even if credentials are compromised through phishing, a subsequent login attempt can be flagged due to unusual device, location, or behavioral risk signals.
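To make the idea of risk-based, step-up authentication concrete, here is a minimal sketch in Python. It is an illustration only, not any vendor's implementation: the `LoginContext` fields, the weights, and the two thresholds are all hypothetical, and a production system would derive them from real telemetry rather than fixed constants.

```python
from dataclasses import dataclass


@dataclass
class LoginContext:
    """Signals gathered at login time. All field names are hypothetical."""
    known_device: bool       # device has been seen before for this user
    usual_location: bool     # location matches the user's login history
    typical_behavior: bool   # e.g. login hour or typing cadence looks normal


# Illustrative weights and thresholds (assumptions, not tuned values).
WEIGHTS = {"known_device": 40, "usual_location": 30, "typical_behavior": 30}
STEP_UP_THRESHOLD = 30
DENY_THRESHOLD = 70


def risk_score(ctx: LoginContext) -> int:
    """Sum the weight of every signal that looks unusual (0 means no risk)."""
    score = 0
    if not ctx.known_device:
        score += WEIGHTS["known_device"]
    if not ctx.usual_location:
        score += WEIGHTS["usual_location"]
    if not ctx.typical_behavior:
        score += WEIGHTS["typical_behavior"]
    return score


def decide(ctx: LoginContext) -> str:
    """Map the score to an action: allow, ask for a stronger factor, or deny."""
    score = risk_score(ctx)
    if score >= DENY_THRESHOLD:
        return "deny"
    if score >= STEP_UP_THRESHOLD:
        return "step_up"
    return "allow"
```

In this sketch, a login with valid credentials from an unrecognized device triggers a step-up challenge, while an unrecognized device in an unusual location is denied outright, even though the password was correct.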

ID Dataweb, for example, combines telecom data with network characteristics, device attributes, and more to assess each login for risk. Even if an attacker bypasses two layers of authentication by stealing credentials and intercepting an SMS code, access can still be denied by detecting threat signals such as a SIM-swapped phone number or a device exhibiting suspicious activity elsewhere.
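The scenario above, where stolen credentials and an intercepted SMS code both succeed but a later signal still blocks access, can be sketched as a chain of independent checks. This is a simplified illustration and does not represent ID Dataweb's product or API: the `LoginAttempt` fields, the seven-day SIM-change window, and the layer names are assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


@dataclass
class LoginAttempt:
    """Outcome of each check for one login. All fields are hypothetical."""
    password_ok: bool
    sms_code_ok: bool
    days_since_sim_change: int  # assumed telecom signal
    device_flagged: bool        # assumed device-reputation signal


# Assumed policy: a SIM changed within the last 7 days is treated as risky.
MIN_DAYS_SINCE_SIM_CHANGE = 7

Check = Tuple[str, Callable[[LoginAttempt], bool]]

# Each layer is evaluated independently; passing one says nothing about the next.
LAYERS: List[Check] = [
    ("password", lambda a: a.password_ok),
    ("sms_code", lambda a: a.sms_code_ok),
    ("sim_swap", lambda a: a.days_since_sim_change >= MIN_DAYS_SINCE_SIM_CHANGE),
    ("device", lambda a: not a.device_flagged),
]


def evaluate(attempt: LoginAttempt) -> Tuple[bool, str]:
    """Return (granted, failed_layer). failed_layer is "" when access is granted."""
    for name, passed in LAYERS:
        if not passed(attempt):
            return False, name
    return True, ""
```

Here an attacker who has the correct password and a valid SMS code is still stopped at the `sim_swap` layer if the phone number was moved to a new SIM the day before.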

Layered defenses do not rely solely on users to detect phishing. They assume one layer may fail. If someone clicks a malicious link or is tricked, the next layer must catch the attack. This approach shifts the burden from individuals, who cannot be expected to make perfect security decisions every time, to a system designed to withstand the failure of any single layer.

Conclusion

AI has amplified phishing and account takeover risk. It lowers the barrier to entry for cybercriminals while reducing the effectiveness of traditional protections.

Organizations must assess whether they are prepared for AI-powered attacks. This includes updating phishing training to address deepfakes and AI-generated content. It also means investing in solutions that evaluate contextual signals and threat intelligence at each login, rather than relying solely on static rules.

Digital trust must be earned through multiple layers of verification. Adaptive, multi-layer defenses make it significantly harder for AI-assisted fraud to succeed.